Toronto-based startup SwiftAI has announced a method for training large language models (LLMs) in the Swift programming language, which it says raises computational throughput from the gigaflop-per-second range to the teraflop-per-second range. If the claim holds, it could shake up an AI training landscape built largely on conventional languages like Python. But as always, the question remains: does this innovation deliver tangible benefits, or is it just another blip in the AI hype cycle?

## What SwiftAI Does

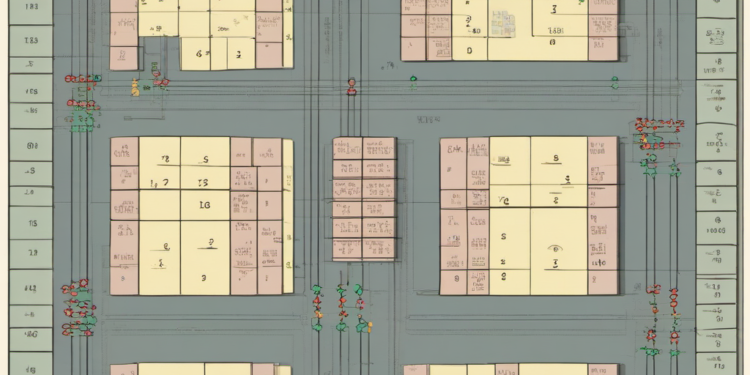

SwiftAI claims to have developed a way to use Swift, best known for iOS app development, to train LLMs at speeds previously unattainable with traditional setups. By optimizing matrix multiplication, a core operation in AI model training, the company asserts it can boost throughput from gigaflops per second (Gflop/s) to teraflops per second (Tflop/s), roughly a thousandfold increase. It attributes this leap to Swift’s memory management and parallel processing capabilities, which it says are often overlooked in AI applications.
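
To make the Gflop/s figure concrete, here is a minimal sketch of how matrix multiplication throughput is commonly measured. It is not SwiftAI's method, which has not been published; the function names `matmul` and `gflops` and the problem size `n` are hypothetical choices for illustration. The sketch assumes square, row-major matrices and counts 2·n³ floating-point operations per multiplication.

```swift
import Foundation

/// Multiplies two n×n row-major matrices with a plain triple loop.
/// The i-k-j loop order keeps the innermost accesses contiguous in memory.
func matmul(_ a: [Double], _ b: [Double], n: Int) -> [Double] {
    precondition(a.count == n * n && b.count == n * n, "expected n×n inputs")
    var c = [Double](repeating: 0.0, count: n * n)
    for i in 0..<n {
        for k in 0..<n {
            let aik = a[i * n + k]
            for j in 0..<n {
                c[i * n + j] += aik * b[k * n + j]
            }
        }
    }
    return c
}

/// Converts a timing into Gflop/s, counting 2·n³ floating-point operations
/// (one multiply and one add per inner-loop step).
func gflops(n: Int, seconds: Double) -> Double {
    let flops = 2.0 * Double(n) * Double(n) * Double(n)
    return flops / seconds / 1e9
}

let n = 128  // hypothetical problem size, kept small so the sketch runs quickly
let a = (0..<n * n).map { _ in Double.random(in: 0..<1) }
let b = (0..<n * n).map { _ in Double.random(in: 0..<1) }

// Wall-clock timing of a single run; a real benchmark would repeat and average.
let start = Date()
let c = matmul(a, b, n: n)
let elapsed = Date().timeIntervalSince(start)

// Printing a checksum keeps the result in use so the work is not optimized away.
print("checksum: \(c.reduce(0, +))")
print(String(format: "%.3f Gflop/s", gflops(n: n, seconds: elapsed)))
```

A single-threaded loop like this typically lands in the Gflop/s range on current CPUs. Reaching Tflop/s generally requires vectorization, multithreading, or an accelerator, so a claim of that size is best checked by running the same measurement against the vendor's own kernel.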

The company, founded by a group of former Apple engineers, argues that Swift’s strong type safety and fast execution speed can offer a more efficient pipeline for AI model training. This could reduce the time and cost of developing complex models, making advanced AI more accessible to smaller firms with limited resources.

## Competitive Context

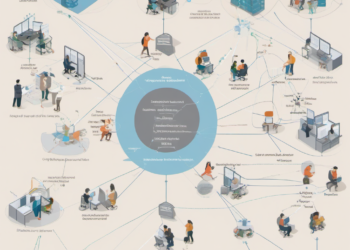

SwiftAI’s approach enters a crowded field dominated by established frameworks like TensorFlow and PyTorch, both of which are deeply entrenched in the AI community. These platforms have benefitted from years of development and a robust ecosystem of tools and libraries. SwiftAI’s challenge will be to convince developers and data scientists to adopt Swift for AI tasks, a language traditionally not associated with machine learning.

Moreover, the AI training space is buzzing with numerous innovations aiming to improve efficiency, from Google’s TPUs to custom silicon solutions by startups like Graphcore. SwiftAI’s reliance on a software-based solution could be a double-edged sword; while it avoids the need for specialized hardware, it also competes directly with highly optimized software stacks that have a strong foothold in the industry.

## Real Implications for Founders and Engineers

For startup founders and engineers, SwiftAI’s approach presents both opportunities and challenges. On the one hand, the potential cost savings and efficiency improvements could democratize access to AI capabilities, enabling smaller companies to compete with tech giants. On the other hand, the shift to a new programming language for AI development requires investment in retraining and potentially retooling existing systems.

Engineers might appreciate the potential increase in productivity SwiftAI offers, but they will need to weigh this against the learning curve associated with adopting Swift for a purpose it was not originally designed for. The true test will be whether SwiftAI can provide enough compelling evidence of its benefits to warrant a shift in a field that often values stability and familiarity over novelty.

## What Happens Next?

SwiftAI plans to release its framework to the public within the next six months, aiming to build a community around its toolset. For those in the tech industry, this means a period of observation and experimentation. Founders and engineers should consider piloting SwiftAI’s framework in controlled environments to assess its practicality and performance claims. Investors, meanwhile, will want to keep a close eye on adoption rates and feedback from early users to gauge whether SwiftAI can carve out a niche in the competitive AI training market.