OpenAI’s ChatGPT collected “vast amounts” of Canadian data without proper consent, according to a three-year investigation by the federal privacy commissioner and officials in British Columbia, Quebec, and Alberta. The finding raises questions about how AI companies obtain and handle personal information. As AI tools spread into daily life, balancing innovation with privacy rights is becoming harder to put off.

## What ChatGPT Actually Does

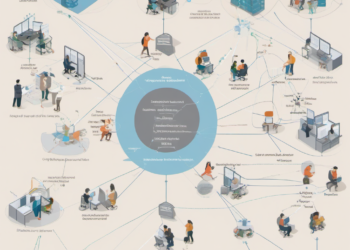

ChatGPT, developed by OpenAI, is a large language model that generates human-like text in response to user prompts. It can perform a range of tasks, from answering questions and drafting emails to creating content and providing customer support. The model is trained on a very large body of text, from which it learns language patterns and contextual cues.

While the utility of ChatGPT is evident, the methods used to gather and process its data are now under scrutiny. The report from Canadian privacy authorities indicates that OpenAI collected data without obtaining proper consent from individuals, which the authorities consider a violation of Canadian privacy laws. This raises questions about the transparency and accountability of AI systems that depend on large-scale data collection.

## Competitive Context

In the highly competitive AI landscape, companies like OpenAI are racing to develop the most capable models. Competitors such as Google’s Gemini (formerly Bard) and Meta’s Llama are also vying for dominance, each with its own approach to data collection and model training. However, the lack of clear regulatory frameworks globally has allowed some companies to operate in a legal grey area, prioritizing rapid development over privacy considerations.

The competition is not merely about technological prowess but also about public trust. As users become more aware of data privacy issues, they may gravitate towards platforms that offer greater transparency and control over personal information. This could affect both market share and the adoption rate of AI applications.

## Real Implications for Founders, Engineers, and the Industry

For founders and engineers, the findings of this investigation highlight the critical importance of integrating privacy considerations into the development cycle of AI products. Startups and tech companies must prioritize data protection from the outset to avoid legal repercussions and maintain user trust.

The Canadian investigation serves as a cautionary tale, emphasizing the need for robust privacy policies and transparent data practices. Engineers developing AI systems should design pipelines that collect only the data they need, or apply techniques such as differential privacy, which adds calibrated noise to results so that no single individual’s data can be inferred from them.
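
To make the differential privacy idea concrete, here is a minimal Python sketch of the Laplace mechanism applied to a simple count query. It is an illustration under stated assumptions, not a description of how OpenAI or any other company handles data: the function names `laplace_noise` and `private_count`, the sample records, and the value of `epsilon` are all hypothetical.

```python
import random
from typing import Callable, Iterable, Optional


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) noise.

    The difference of two independent exponential draws with mean `scale`
    follows a Laplace distribution centred on zero with that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(
    records: Iterable[dict],
    predicate: Callable[[dict], bool],
    epsilon: float,
    rng: Optional[random.Random] = None,
) -> float:
    """Return a noisy count of the records matching `predicate`.

    Hypothetical helper: a count changes by at most 1 when one person's
    record is added or removed, so its sensitivity is 1 and the noise
    scale is sensitivity / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for record in records if predicate(record))
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    # Made-up records, for illustration only.
    users = [{"age": age} for age in (23, 35, 41, 58, 62, 19, 47)]
    noisy = private_count(users, lambda u: u["age"] >= 40, epsilon=0.5)
    print(f"Noisy count of users aged 40+: {noisy:.2f}")
```

A smaller `epsilon` adds more noise and gives stronger privacy at the cost of accuracy. The standard-library `random` module is used here only to keep the sketch self-contained. It is not a secure source of randomness, so a production system would more plausibly rely on a vetted differential privacy library.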

For the broader industry, this development might prompt a shift towards more stringent regulatory oversight. Companies may need to adapt quickly, reassessing their data handling practices to ensure compliance with evolving laws. This could drive a wave of innovation in privacy-preserving technologies, as businesses seek to balance data utility with legal and ethical obligations.

## What Happens Next

The report from Canadian privacy authorities is likely to prompt further discussion, and possibly regulatory action, on AI and data privacy. OpenAI will need to respond to the findings and may have to revise its data collection practices to comply with Canadian law.

For founders and engineers, this situation underscores the importance of staying informed about privacy regulations and integrating compliance into product development. Investors should also pay attention to how companies address these challenges, as regulatory compliance could become a critical factor in evaluating the viability and sustainability of AI ventures.

In essence, this development is a wake-up call for the entire tech industry, urging a reevaluation of how data is collected, used, and protected in the age of AI.