Agent-skills-eval, a new tool for evaluating whether agent skills actually improve an agent’s outputs, is drawing interest from developers and product managers who want to know if adding skills truly improves the performance of AI-driven applications. Its emergence highlights the growing need for practical metrics that assess AI capabilities beyond theoretical promises.

## What Agent-skills-eval Does

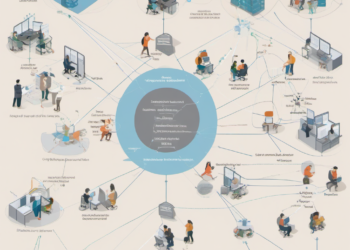

Agent-skills-eval is a software tool that allows users to test and measure whether specific skills embedded in AI agents lead to better task execution. Primarily targeting AI developers and researchers, it provides a structured framework to evaluate how skills affect an agent’s performance in various scenarios. By quantifying the impact of skills, the tool aims to offer insights into optimizing AI systems for real-world applications.

The tool is particularly useful for those working with complex AI systems where multiple skills might interact. It can help identify which skills are contributing positively or negatively to task outcomes. This functionality is critical as the AI industry continues to grapple with the challenge of making AI systems more reliable and efficient.
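One common way to measure whether a skill helps or hurts is a leave-one-out comparison: run the same tasks with every skill enabled, then again with one skill removed, and compare pass rates. The snippet below is a minimal sketch of that idea only. It does not use Agent-skills-eval’s own interface, which is not covered here; `toy_agent`, `grader`, `pass_rate`, `skill_deltas`, and the skill names are all hypothetical stand-ins.

```python
from statistics import mean
from typing import Callable, Dict, FrozenSet, List

# Hypothetical signatures: an agent maps (task, enabled skills) to an answer,
# and a grader decides whether that answer is acceptable for the task.
Agent = Callable[[str, FrozenSet[str]], str]
Grader = Callable[[str, str], bool]


def pass_rate(agent: Agent, grader: Grader, tasks: List[str],
              skills: FrozenSet[str]) -> float:
    """Fraction of tasks the agent passes with the given skills enabled."""
    return mean(1.0 if grader(t, agent(t, skills)) else 0.0 for t in tasks)


def skill_deltas(agent: Agent, grader: Grader, tasks: List[str],
                 skills: List[str]) -> Dict[str, float]:
    """Leave-one-out: pass rate with all skills minus pass rate without each one.

    A positive delta means removing the skill hurt (the skill helps);
    a negative delta means removing it helped (the skill hurts).
    """
    full = frozenset(skills)
    baseline = pass_rate(agent, grader, tasks, full)
    return {s: baseline - pass_rate(agent, grader, tasks, full - {s})
            for s in skills}


# --- Toy stand-ins so the sketch runs without any model or external tool ---
EXPECTED = {"sum 2 3": "5", "sum 10 5": "15", "upper abc": "ABC", "upper xyz": "XYZ"}


def toy_agent(task: str, skills: FrozenSet[str]) -> str:
    op, *args = task.split()
    if op == "sum":
        # Assumed behavior: the agent can only add when "arithmetic" is enabled.
        return str(sum(int(a) for a in args)) if "arithmetic" in skills else "?"
    answer = args[0].upper()
    if "verbose" in skills:
        # Assumed behavior: "verbose" appends text that breaks exact-match grading.
        answer += " (done)"
    return answer


def grader(task: str, output: str) -> bool:
    return output == EXPECTED[task]


deltas = skill_deltas(toy_agent, grader, list(EXPECTED), ["arithmetic", "verbose"])
print(deltas)
```

In this toy setup the `arithmetic` skill gets a positive delta and the `verbose` skill a negative one. With a real agent, outputs vary between runs, so each configuration would need repeated trials before a difference in pass rate means much. Leave-one-out scoring can also understate a skill whose effect depends on another skill being present.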

## Competitive Context

Agent-skills-eval enters a crowded market of AI evaluation tools, each promising unique insights into AI system capabilities. While many existing tools focus on general performance metrics, Agent-skills-eval distinguishes itself by homing in on the skills aspect of AI agents. This specialization could carve out a niche for the tool, provided it delivers on its promise of actionable insights.

Despite its focused approach, the tool faces stiff competition from established platforms like OpenAI’s evaluation suite and Google’s AI performance tools. These giants bring robust ecosystems and massive data resources to the table, potentially overshadowing newcomers. However, Agent-skills-eval’s targeted evaluation approach may appeal to smaller teams looking for specific insights without the overhead of larger platforms.

## Real Implications for Founders, Engineers, and the Industry

For founders and engineers, Agent-skills-eval represents both a challenge and an opportunity. The tool raises the bar for AI development by emphasizing skill-specific evaluation, which could lead to more nuanced and effective AI solutions. Engineers may need to build expertise in integrating and assessing individual agent skills, rather than measuring only overall system performance.

From an industry perspective, the tool could push for more transparency in AI development processes. As companies seek to prove the effectiveness of their AI solutions, tools like Agent-skills-eval could become part of standard practice in demonstrating system capabilities. This shift could lead to more reliable AI applications, benefiting end-users who are often left questioning the real-world utility of AI systems.

The introduction of this tool could also influence investment trends. Investors, always on the lookout for startups with measurable results, might find companies that use Agent-skills-eval more attractive. The ability to show quantifiable improvements in AI performance could become a key differentiator in the competitive AI landscape.

## What Happens Next

Adoption of Agent-skills-eval will likely hinge on whether it produces tangible results for early users. For founders and engineers, the tool’s promise lies in its potential to refine AI development practices and push the industry toward more skill-driven evaluation methods. If that happens, investors may increasingly weigh such metrics, signaling a shift in how AI solutions are assessed and valued.